In the railways, safety critical software applications are usually developed and validated independently from the parameters or constant data that fine-tune their behavior.

CLEARSY Data Solver

For example, the track topology, signal and point positions, kilometer points, etc. are constant data used by an automatic pilot to compute braking curves and to determine when to trigger the emergency brake.

So, each part must be safe at the same level: SIL4.

Data validation process consists in ensuring that the data set is correct. For example: in ERTMS standard, tracks are equipped with signals and beacons. Rules to verify are related to the topology: each signal should have an associated beacon group, distance between signal and its beacon group should be less than 2 meters, beacons should be less than 2 meters apart from each other’s. Other rules to verify are related to the content of the messages sent by the beacons to the trains (distances, gradients, speeds…).

Data validation process

Manual data validation process used to be entirely human, leading to painful, error-prone, long-term activities (requiring several months to check manually up to 100,000 items of data against 1,000 rules). Formal data validation process is the natural evolution of this human-based process into a secure one. This approach has been invented by CLEARSY, thanks to its deep knowledge and skills on formal method technology and associated tools.

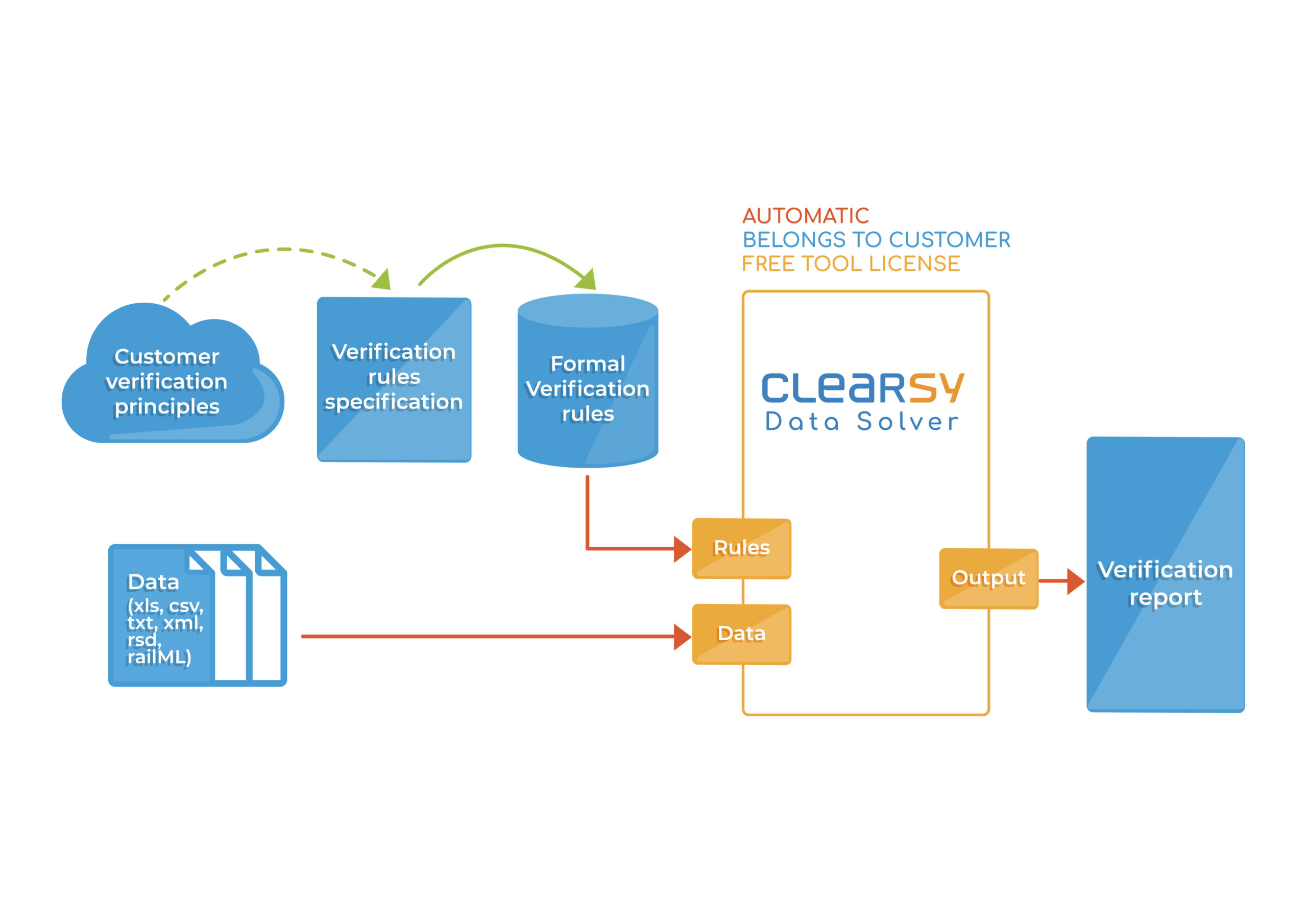

Formal Data validation tool.

CLEARSY provides a data validation tool and associated services. The benefits of this formal approach are diverse:

- It is fast: up to 100x faster than a pure human verification, a couple of hours are enough for validating a complete railway project

- It is automatic, exhaustive, push-button and repeatable at will (avoids fastidious non-regression phase)

- It removes human errors, as it makes use of certified formal techniques

- It allows a strong reuse from one project to another (capitalization of the knowledge)

- Especially targets railway projects such as CBTC, ERTMS, IXL, RBC, ATS…

Product description

A customized tool

- Adapted to the customers data file format (xml, xls, parameter file, railML, csv, txt…)

- Adapted to the customers verification report documents and process

- Can be used by the End User

A service of formal rules modelling

The tool is a solver: that means the data set is verified, rule by rule on all the data concerned. These project rules can be developed by the customer himself or by CLEARSY. We propose this service:

- Modelling the formal rules to be satisfied by the data concerned.

- These formal rules are easily readable after training.

- The rules belong to the customer and can be reused at will, all along the different projects or the different versions of a product.

End users can also specify their own rules and use the tool by themselves.

Commercial references

The customers are railway or subway operators, or industrial companies.

|

|

|

|

|

|

Link to this offer

Linked to this offer

Linked to this offer

- • Safety is not an option: it is a requirement

- • New T2 for SIL4-Certified Version of the CLEARSY Data Solver – Now Even More...

- • CLEARSY at FM2024

- • CLEARSY’s expertise on RATP line 14 for the 2024 Olympic Games

- • Extension of Line 14 of the Paris Metro: over 25 years of reliability thanks to ...

- • CLEARSY joins forces with The Neat Company to integrate the CLEARSY Data Solver ...

- • CLEARSY an innovative player in saving little-used rail lines

- • Version 4 of the ETCS specification has just been published

- • A certified version of ATELIER B is planned for 2024

- • Formal activities through the V cycle

- • CLEARSY will attend the ERTMS 2022 Conference

- • The safety-critical data validation tool is certified T2 SIL4.

- • Formal methods for validating parameterization data: CLEARSY is chosen

- • Certified T2, the CLEARSY DATA SOLVER tool speeds up the data validation process

- • CLEARSY carries out the formal validation of data from SNCF Réseau’s...

- • Technical seminar Newcastle University

- • Formal methods in action in the railways

- • Safety-critical Systems Symposium

- • CLEARSY participated in the prestigious International Conference on Formal Metho...

- • Newcastle University Technical Seminar

- • CAI 2013 Conference

- • Formal Data Validation Tutorial at ABZ 2014, Toulouse

- • Data Validation & Reverse Engineering

- • ProB: a model-checker for data validation

- • PredicateB: a predicate animator

- • Data validation in the Railways

- • Introduction to data validation

Link to this offer